When Clarity Becomes Immoral (#34)

What your writing makes easier—and for whom

Writers love the fiction of neutrality.

It flatters us.

It lets us imagine that we stand outside the machine with clean hands, translating complexity for whoever needs help. We are not the architect. We are not the executive. We are not the one setting the incentives, designing the dark pattern, or approving the surveillance model.

We are just the ones explaining how it works.

Just the writers.

Just the docs people.

Just doing the job.

But that fiction collapses the moment your writing starts making a harmful system easier to use, easier to scale, or easier to excuse.

Clarity is not automatically moral.

Clarity transfers power.

The moment you explain something clearly, you increase someone’s ability to act. Sometimes that is good. Sometimes it is generous. Sometimes it is the difference between a frustrated user and a capable one.

And sometimes it is something uglier.

If your work removes friction from abuse, you are not merely describing the machine.

You are helping it run. You are oiling it.

So the real question is not, “Am I just doing my job?”

It is not even, “Did I build the harmful system?”

It is this:

At what point does explanation stop serving the user and start serving the harm?

Clarity is not one thing

Writers talk about clarity as if it were a single virtue.

It isn’t.

There are levels to it, and pretending otherwise is one of the ways we avoid responsibility.

The first level is descriptive clarity. This is simple explanation.

What is the thing?

What does this system do?

What does this term mean?

You are helping someone see what is there. Neutral.

The second level is operational clarity. You are helping someone use the tool.

Click here.

Configure this.

Set the parameter.

You are increasing capability. Again, neutral.

The third level is strategic clarity. Strategic clarity helps someone use the system more persuasively, more profitably, or with less resistance.

It does not merely help someone operate the machine. It helps them optimize it.

It smooths perception.

It anticipates objections.

It softens the language around the ugly parts.

It teaches people how to keep the machine running without alarming the people trapped inside it. That is no longer neutral.

Consider the differences:

Explain what ad targeting is: descriptive.

Explain how to configure tracking pixels: operational.

Explain how to maximize behavioral capture while softening the language around surveillance: strategic.

When strategic clarity becomes complicity

The moral line is crossed earlier than people want to admit.

Most people only feel comfortable discussing ethics when the example is cartoonishly evil.

Genocide.

Forced labor.

Historical atrocity.

That is convenient.

If the standard is catastrophe, most white-collar professionals get to go home feeling innocent. Their work may be manipulative, extractive, or deceptive, but at least it isn’t that.

This is moral cowardice disguised as sophistication.

You do not need an extreme case to see where the problem begins.

It begins with strategic clarity.

Strategic clarity does not merely help someone use a system.

It helps them use it without resistance.

You see it in onboarding flows that teach teams how to increase “engagement” through variable rewards and compulsive loops that look suspiciously like slot-machine mechanics.

You see it in documentation that helps growth teams harvest more behavioral data while calling it “personalization.”

You see it in consent-flow guidance that explains how to improve opt-in rates by making refusal less visible, less convenient, less legible.

By the time the writer says, “I’m not hurting anyone. I’m just explaining the feature…” the real work has already been done.

Because strategic clarity often depends on something else: euphemism.

Writers like to believe the ethical problem lives in the system itself. Sometimes it lives in the nouns:

Surveillance becomes signal enrichment.

Manipulation becomes friction reduction.

Compulsion becomes engagement optimization.

Data extraction becomes experience personalization.

Deception becomes streamlining the user journey.

This is not cosmetic language. It is moral deodorant.

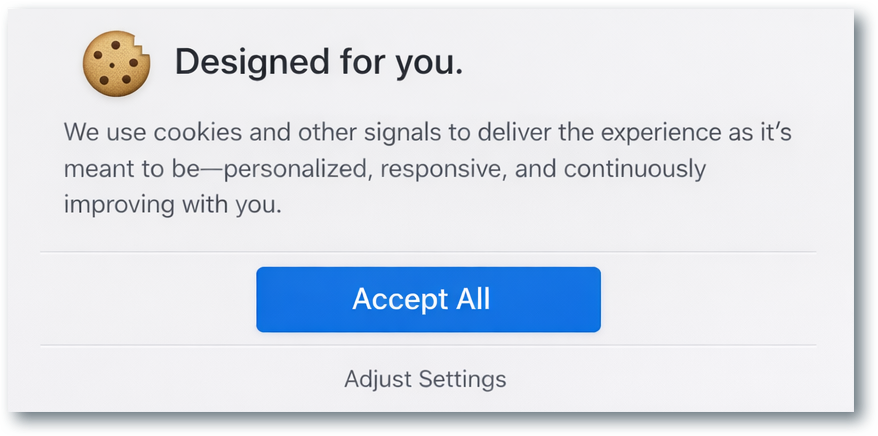

A euphemistic pop-up cookie consent window

Euphemism does not just soften reality for the user. It softens it for the writer. Once the language is cleaned up, the work no longer feels like harm. It feels like optimization.

Language does not merely report reality. It frames it. It determines what is seen clearly and what remains obscured.

A euphemism places distance between an action and its human meaning. Once that distance exists, people can keep working.

Upsells move faster.

The documentation looks cleaner.

The system becomes easier to defend.

Writers are particularly dangerous here because we specialize in removing friction from language. We know how to make something sound coherent, reasonable, and inevitable.

We can make an ugly process feel normal or make a predatory workflow read like a standard operating procedure.

That is a professional skill.

It is also a moral liability.

Because the more elegantly you disguise harm, the more implicated you become in it.

Examining the morality of clarity

“I’m just the writer.”

It is one of the most reliable refuges in the profession.

And to a point, it is true. Writers do not design every system they document. They do not control the incentives behind it. They are rarely the ones deciding how aggressively a tool will be deployed.

But authorship is not the relevant consideration. What matters is whether our labor makes a system easier to use, harder to resist, or easier to excuse.

We already understand this principle when the outcome is positive. We argue that documentation improves adoption, clarity reduces support costs, and good writing builds trust and helps systems scale.

Fine.

Then we cannot claim writing is powerless when it serves something harmful. That is not neutrality.

It is self-protection.

So when the ethical tension appears, the writer needs a test.

Ask yourself:

“Who benefited from the friction I removed?”

If clarity makes legitimate work easier, it is serving the user.

If clarity makes manipulation, surveillance, deception, or exploitation easier, it is harming the user.

That distinction changes how a great deal of professional writing begins to look.

Some “best practices” are techniques for making unacceptable systems feel administratively normal.

Some “clear communication” is enablement.

What your clarity serves

Writers like to imagine themselves as translators standing outside power.

Often they are not outside it at all.

Often they are inside it—refining its language, smoothing its edges, and helping it travel farther than it could have gone on its own.

That is why clarity alone is not enough.

A sentence can be clean and still corrupt.

A document can be useful and still mislead.

A writer can be precise and still be morally compromised.

The real question is not whether your prose is effective.

The question is what your effectiveness serves.

If your writing helps people see clearly, act honestly, and accomplish legitimate work, clarity is a gift.

But if your writing helps manipulation, surveillance, or deception operate more smoothly and encounter less resistance, your sentences are not innocent.

They are accomplices helping the machine deceive its users.