A Working Product with a Broken Contract (#38)

When the writer is forced to make the product make sense

Modern software teams are very good at proving the part they were assigned works. The designer points to the screen and says the interface matches the mockup. The frontend engineer demonstrates the component renders. The backend engineer shows the endpoint responds. The PM confirms from the ticket that the acceptance criteria were met. QA verifies the expected path passes.

Everyone can be right.

And the product can still be lying. The interface suggests one thing. The system does another. And the output gives the user just enough confidence to believe a promise was honored.

A field says: Add additional instructions. The user adds additional instructions. The output looks aligned.

The team moves on.

No one asks:

Did the system honor the contract it made with the user?

And increasingly, that’s the question technical writers are left to answer.

The Contract Behind the Interface

A software product is full of promises.

Some are explicit. A button says Delete. A field says Additional information. A toggle says Enable. A status says Synced.

Some are implied. If a user enters instructions into a field, the user assumes those instructions influence the result. If a workflow can be configured, the user assumes the configuration changes behavior. If an AI tool invites specificity, the user assumes specificity matters.

Each assumption is a contract.

A feature begins as a clean use case. The PM frames the value. The designer builds the flow. The executive sees the demo and approves it. Engineering implements the defined behavior. QA validates the expected path.

Everything checks out. Yet the contract hasn’t been validated.

The team validated the happy path: a controlled scenario where the feature works. The happy path is not fake. It’s just incomplete.

It is, in many ways, a sales artifact.

It shows the product working under ideal conditions, with aligned assumptions, clean inputs, and cooperative behavior.

Users do not operate under ideal conditions. They arrive with partial knowledge, messy data, and vague intent.

They click things in the wrong order.

They over-specify.

They under-specify.

They assume the product does what it says.

And this is the moment the contract is tested.

Because the user does not experience the product in layers. They experience it as one continuous claim.

They do not care that:

the frontend passed the value.

the backend accepted the request.

the model returned something plausible.

They care that the thing they were led to expect did not happen.

That gap—between claimed behavior and lived behavior—is where the contract breaks.

And it is precisely the gap most teams are not structured to see.

The Assembly Line Came for Product Teams

This problem is not new. It is just wearing better software.

Modern software-as-a-service (SaaS) development follows the logic of industrial specialization. Work is broken into discrete parts. Ownership is assigned. Tasks move across the line. Each person contributes to a piece of the whole without necessarily holding the whole in view.

The assembly line made production efficient. It also created a durable instinct: divide the labor, optimize the part, increase throughput.

In the SaaS world, a feature moves from idea to design to implementation to validation. By the end, it has passed through enough specialized hands that no one is responsible for the behavior of the system as a whole.

This division of labor is necessary. Complex products require depth, focus, and clear boundaries.

But every system optimized for specialization creates a predictable blind spot: what happens between the parts.

That is where the user lives.

The user does not experience the product in layers. They experience the transitions, the seams, the places where assumptions meet reality.

And most teams are too busy polishing their layers to inspect those seams.

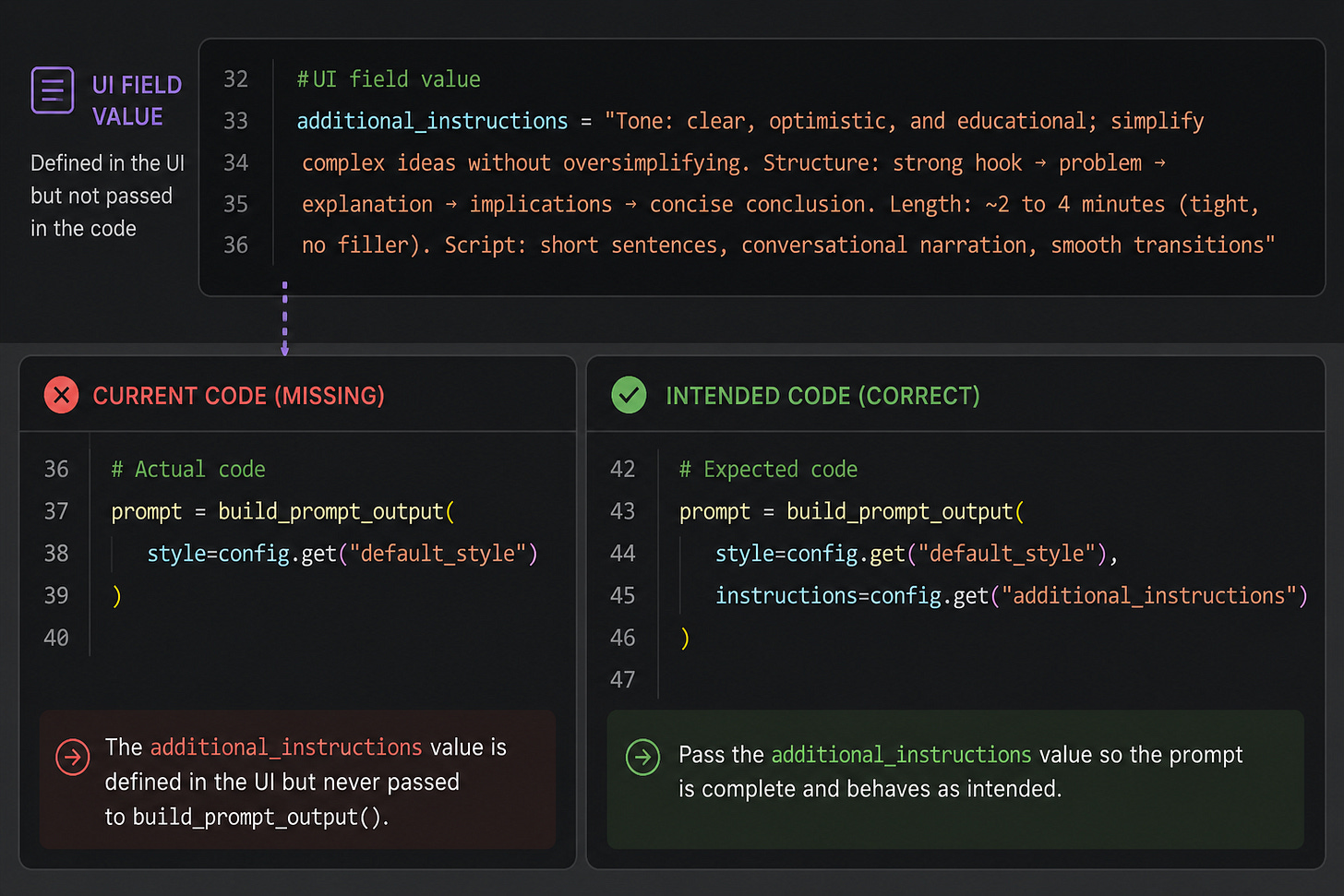

The AI Field That May Not Have Mattered

I was documenting an AI content generation feature.

In the UI, under the Prompt section, there was a field labeled Additional information. The assumption was obvious: whatever I entered there should influence the generated output.

So I tested the field to understand it well enough to explain it.

I started with a detailed prompt—tone, structure, length, style. Clear, optimistic, educational. Strong hook. Clean progression. Conversational delivery.

The output came back.

Then I simplified the additional information. Stripped it down. Different tone. Different structure.

The output came back again.

There wasn’t a meaningful difference.

Not broken.

Off.

That distinction matters.

Because “off” is easy to dismiss. It’s not a loud failure. It’s something you can explain away—model variance, prompt sensitivity, expected behavior, “AI being AI.”

But I’ve learned to pay attention to off.

So I asked the engineer: what kind of prompt is this field actually expecting?

He walked me through how the system was structured—what it was already doing by default. My input looked like it was working because it already aligned with that behavior.

But that explanation didn’t account for why changing my input had no effect on the output.

So he checked the code.

And that’s when it became clear: the Additional information field wasn’t being passed into the generation logic at all.

The UI field accepted input.

The code didn’t pass it into the logic.

That’s the work.

Not just polishing language.

Not just shipping a help article.

I was testing whether the system honored its own contract.

The UI said it mattered.

The code proved it didn’t.
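To make the shape of that gap concrete, here is a minimal sketch of how it can look in code. This is not the product's actual implementation. The names `GenerationRequest`, `build_prompt`, and `handle_generate` are hypothetical, and the hard-coded default instructions are an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass
class GenerationRequest:
    """Hypothetical payload the UI sends when the user asks for content."""
    topic: str
    additional_information: str = ""


def build_prompt(request: GenerationRequest) -> str:
    """Hypothetical prompt builder containing the defect described above.

    The default instructions are hard-coded, and
    request.additional_information is never read.
    """
    return (
        f"Write a short article about {request.topic}. "
        "Use a clear, optimistic, educational tone."
    )


def handle_generate(payload: dict) -> dict:
    """Hypothetical endpoint: accepts the payload and builds the prompt."""
    request = GenerationRequest(
        topic=payload["topic"],
        additional_information=payload.get("additional_information", ""),
    )
    return {"status": "ok", "prompt": build_prompt(request)}


# Each layer-level check passes: the field is accepted and the endpoint responds.
detailed = handle_generate(
    {"topic": "solar power", "additional_information": "Formal tone, bullet points only."}
)
empty = handle_generate({"topic": "solar power"})

print(detailed["status"])                     # ok
print(detailed["prompt"] == empty["prompt"])  # True: the field changed nothing
```

Nothing here throws an error, and every layer can truthfully report success. The only way to see the problem is to compare what happens with and without the user's input.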

Tooling Makes the Blind Spot Worse

The rise of AI-assisted development has intensified this problem.

Not because the tools are ineffective. They are clearly useful. Research published by GitHub reports that Copilot-assisted code can score higher on functional correctness, readability, and maintainability under controlled conditions.

The issue is not whether AI helps engineers ship.

It does.

The issue is what happens when faster shipping becomes a substitute for fuller verification.

Modern development is saturated with signals of correctness.

A unit test validates a unit. An integration test validates a defined interaction. A model evaluation checks selected outputs. A dashboard confirms the system did something measurable.

All of it builds confidence—within scope.

None of it answers the broader question:

Does the product behave the way the user believes it does?

That question is too cross-functional for a test case and too interpretive for most tools.

Traditional software fails in binary ways: a setting saves or it doesn’t.

AI systems fail differently. They may partially honor a request—or ignore it entirely while producing something plausible enough to pass a casual review.

That’s the trap.

The output looks right. The system appears to be working. The signals all align.

But nothing proves the system actually listened.

Teams mistake coincidence for causality.

This is the new failure mode: plausible success masking actual neglect. A plausible output lets the team move on while the system quietly ignores the user.

When the Question Changes

Technical writers do not replace formal evaluation, QA, or engineering validation. But we often perform a kind of qualitative trust check that those signals miss.

In addition to “how do I explain this?”, we have to ask “is this true?”

That question doesn’t get answered abstractly. It gets answered by working the system—by following the friction, pressing on the gaps, and forcing the behavior to reveal itself.

That work isn’t random.

In practice, it looks like this:

Identify — You spot the misalignment. The system produces an outcome that doesn’t match what the interface or workflow implies.

Hypothesize — You turn “this feels off” into a testable suspicion about how the system is actually behaving.

Interrogate — You apply pressure. You trace the input, vary conditions, and ask what actually influences the outcome.

Isolate — You separate signal from noise. You determine how the system actually behaves and whether the feature is incomplete, intentionally scoped, or misaligned with the interface.

Correct — You force alignment. You work with the team to ensure the UI reflects the behavior, the behavior matches the contract, and the documentation describes it without caveat.

This is how you close the gap between what the system says and what it does.
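The Interrogate and Isolate steps can be partly automated. What follows is a rough sketch under stated assumptions, not a standard tool: `field_has_influence` is a hypothetical helper, and the two prompt builders are made-up stand-ins for whatever deterministic step sits between the UI and the model. Comparing that step, rather than the model's final output, keeps model variance out of the answer.

```python
from typing import Callable, Dict, Iterable


def field_has_influence(
    render: Callable[[Dict[str, str]], str],
    base_input: Dict[str, str],
    field: str,
    variants: Iterable[str],
) -> bool:
    """Return True if changing `field` ever changes what `render` produces.

    `render` should be deterministic (for example, the function that
    assembles the final prompt), so any difference is caused by the
    input and not by model variance.
    """
    baseline = render(base_input)
    for value in variants:
        changed = dict(base_input, **{field: value})
        if render(changed) != baseline:
            return True
    return False


# Two hypothetical prompt builders: one ignores the field, one uses it.
def ignoring_builder(inputs: Dict[str, str]) -> str:
    return f"Write about {inputs['topic']}."


def honoring_builder(inputs: Dict[str, str]) -> str:
    extra = inputs.get("additional_information", "")
    return f"Write about {inputs['topic']}. {extra}".strip()


base = {"topic": "solar power", "additional_information": ""}
variants = ["Formal tone.", "Three bullet points, no intro."]

print(field_has_influence(ignoring_builder, base, "additional_information", variants))  # False
print(field_has_influence(honoring_builder, base, "additional_information", variants))  # True
```

A `False` result doesn't say why the field is ignored. It only turns "this feels off" into something the team can reproduce and trace.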

Outside the Layers

Teams build in parts. Each person focuses on a scoped layer. The component renders. The endpoint responds. The test passes. From inside those layers, everything looks correct.

But the product isn’t experienced in layers.

The work requires stepping outside that view. That’s where the technical writer operates. To explain the system honestly, you have to move through the entire workflow—start where the user starts, follow what they see, and decide whether it holds together.

You’re the first one trying to describe the system as a single, coherent experience.

And when it doesn’t hold together, you don’t get to ignore it.

At that point, the work isn’t describing the product.

It’s making sure the product, end to end, does what it says it does.